The HathiTrust Digital Library is a massive collection of digital books: As of 2017, it contains 5 billion pages from 15 million volumes (7 million titles). About 40% of these are public-domain works, meaning anyone can search and read them. Some of these have been marked for their textual genre. Here I do a little verification of this mark-up based on my OED quotation dataset.

Some time ago a team led by Ted Underwood started work on automatic classification of the textual genre of HathiTrust books. Their interim report (2014) is worth reading (here), both for its discussion of the problem of genre and for its technical discussion of machine learning approaches. Basically, they had humans mark a bunch of pages of text for the genre represented on each page, then collected a bunch of data for each page (word counts and “structural features,” which I assume include things like margin justification and information about the whole volume). Then the machine learning bots got to work, trying to use the feature counts to predict the genre. The results were inspected and cleaned up, and datasets were produced for three main categories: poetry, drama, and fiction (downloadable here).

I also have a biggish dataset: something like 56 million words in 2.44 million quotations, from about 164,000 authors (the number of discrete works is harder to say, because OED’s citation conventions vary so much). A large number of these quotations (about 2.1 million) have been marked up for textual genre by humans, including me and a number of trusted minions. So this ought to be a decent comparison set.

Here is what I did: using the Hathi genre metadata-sets for poetry, drama, and fiction, I did my algorithmic best to match up the Author/Title combinations there to OED’s Author/Work abbreviations. This was the hardest part, with the least satisfactory results, but with some manual inspection I was able to reduce the matches to only true positives. Since Hathi tags pages and we tag works (with categories for mixed-genre works), I also eliminated matches for books containing substantially more than one genre, and also works that Hathi predicted had very few pages of the relevant genre. I only counted each individual work once, even when Hathi had multiple editions or volumes (the fiction dataset is about 60% duplicates).
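For the curious, the matching-and-filtering steps described above can be sketched roughly as follows in Python. This is an illustration under assumptions, not the code actually used: the record layout (`author`, `title`, `pages`), the function names, and the two thresholds are all hypothetical, and real author/title matching needs far more care (and manual inspection) than this crude normalization.

```python
import re
from collections import defaultdict

def normalize(text):
    """Lowercase, drop punctuation, collapse whitespace (a crude matching key)."""
    text = re.sub(r"[^a-z0-9 ]+", " ", text.lower())
    return " ".join(text.split())

def dominant_genre(page_counts, min_share=0.8, min_pages=20):
    """Return a volume's main genre, or None if it is mixed or too thin.

    page_counts maps genre name -> predicted page count for one volume.
    Both thresholds are illustrative assumptions, not values from the post.
    """
    total = sum(page_counts.values())
    if total == 0:
        return None
    genre, pages = max(page_counts.items(), key=lambda kv: kv[1])
    if pages < min_pages or pages / total < min_share:
        return None
    return genre

def match_works(hathi_volumes, oed_works):
    """Map each matched work to one Hathi genre, counting each work once."""
    oed_keys = {(normalize(a), normalize(t)) for a, t in oed_works}
    genres_seen = defaultdict(set)
    for vol in hathi_volumes:
        key = (normalize(vol["author"]), normalize(vol["title"]))
        genre = dominant_genre(vol["pages"])
        if key in oed_keys and genre is not None:
            genres_seen[key].add(genre)
    # Duplicate editions collapse into one key; keep a work only if
    # all of its surviving volumes agree on a single genre.
    return {k: next(iter(g)) for k, g in genres_seen.items() if len(g) == 1}
```

Collapsing on a normalized (author, title) key is what makes duplicate editions and multi-volume sets count once; the share threshold is what drops mixed-genre books.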

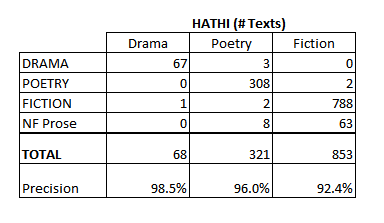

This resulted in 68 matches to Hathi texts marked as (mainly) drama, 321 mainly poetry, and 853 mainly fiction. How do Hathi’s genres match up with ours, you ask? Not too shabbily! Here’s an agreement table, with LOW/OED genres in four rows and Hathi’s in two columns:

Overall I’m pretty impressed and a bit surprised by the accuracy of the Hathi tagger. Of the titles that could be matched to works quoted in the OED, it correctly labelled essentially all the dramatic works, and did very well with both poetry and fiction (though slightly less well with fiction).
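If you want to tabulate this kind of agreement yourself, a minimal sketch in Python might look like the following. It assumes you already have one (human genre, predicted genre) pair per matched work; the function names are made up for illustration and no real counts from my data appear here.

```python
from collections import Counter

def agreement_table(pairs):
    """Count (human_genre, predicted_genre) pairs into a nested dict.

    Rows are the human-assigned genres, columns the predicted ones.
    """
    table = {}
    for (human, predicted), n in Counter(pairs).items():
        table.setdefault(human, {})[predicted] = n
    return table

def precision(table, genre):
    """Share of works predicted as `genre` that humans also tagged as `genre`."""
    predicted_total = sum(row.get(genre, 0) for row in table.values())
    if predicted_total == 0:
        return None  # nothing was predicted as this genre
    return table.get(genre, {}).get(genre, 0) / predicted_total
```

Reading down a column of such a table gives the precision for that predicted genre, which is the figure being compared in this post.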

When it did mislabel something, the work almost always belonged to that “other” category, prose non-fiction. Many of these were personal memoirs, autobiographies, and histories mislabeled as fiction, which is understandable. One can see why Hurd’s Moral and Political Dialogues, Hunt’s Lord Byron and Some of His Contemporaries, and Berkeley’s Life and Recollections might look a little like fiction to this bot. Emerson’s Essays and Barbour’s Florida for Tourists, Invalids and Settlers, however, are less obviously confusable with fiction. Only a few of the mislabeled Hathi texts were really puzzling: Siluria: A history of the oldest fossiliferous rocks and their foundations, for instance, by Roderick Murchison, which the classifier thought contained something like 70 pp. of verse, for some reason.

NB. The results above are actually even better than the classifier’s own self-accuracy measurements, I assume as a result of my title-matching methodology. Here is one of Underwood’s confusion matrixes from his report, with machine-predicted values running in columns and actual hand-tagged values running in rows (I have removed a fifth category from the columns, “paratext”, which isn’t relevant):

These are numbers for words, not pages or works. I disagree with this choice, since it will under-weight poetry and drama false positives by a significant percentage, because prose tends to fit many more words on a single page. So I think the two prose precisions may be a bit inflated, which may account for why fiction is the only genre for which I disagree more with the model than it disagrees with itself (you know what I mean). On the other hand, my elimination of duplicates probably drove down the precision by a fair amount as well.
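To make the weighting point concrete, here is a toy calculation in Python. The page records and word counts are invented purely for illustration (they are not from Underwood's report or from my data): ten pages predicted as fiction, of which two are really verse with far fewer words per page.

```python
def precision_by(pages, genre, weight):
    """Precision for `genre`, weighting each predicted page by weight(page)."""
    predicted = [p for p in pages if p["predicted"] == genre]
    total = sum(weight(p) for p in predicted)
    if total == 0:
        return None
    correct = sum(weight(p) for p in predicted if p["actual"] == genre)
    return correct / total

# Hypothetical pages: 8 true fiction pages at 300 words each,
# 2 verse pages at 100 words each, all predicted as fiction.
pages = (
    [{"predicted": "fiction", "actual": "fiction", "words": 300}] * 8
    + [{"predicted": "fiction", "actual": "poetry", "words": 100}] * 2
)

by_page = precision_by(pages, "fiction", lambda p: 1)           # 8 / 10
by_word = precision_by(pages, "fiction", lambda p: p["words"])  # 2400 / 2600
```

Counted by page, fiction precision here is 0.8; counted by word, it rises to about 0.92, because the sparse verse pages barely register. That is the sort of inflation I have in mind.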
